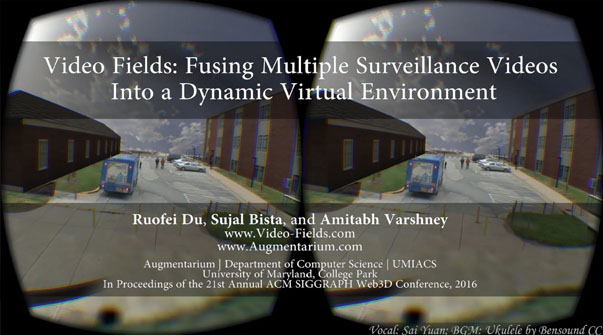

The Video Fields system fuses multiple videos, camera-world matrices from a calibration interface, static 3D models, and satellite imagery into a novel dynamic virtual environment. It integrates automatic segmentation of moving entities during the rendering pass and achieves view-dependent rendering in two ways: early pruning and deferred pruning. Video Fields uses WebGL and WebVR to achieve cross-platform compatibility across smartphones, tablets, desktops, high-resolution tiled curved displays, and virtual reality head-mounted displays. See the supplementary video at http://augmentarium.umd.edu and http://video-fields.com.
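To make the role of a camera-world matrix concrete, the following is a minimal sketch of generic projective texture mapping: a world-space point is projected into one camera's video frame to find which pixel of the video should color it. It is not the Video Fields implementation. The function name `project_to_video_uv`, the row-major 4x4 matrix layout, and the `DEMO_CAMERA` matrix are assumptions made for illustration, and the sketch does not model early or deferred pruning.

```python
from typing import Optional, Sequence, Tuple

# Hypothetical type: a row-major 4x4 matrix that maps world coordinates to the
# camera's clip space (assumed here to combine the view and projection steps).
Matrix4 = Sequence[Sequence[float]]


def project_to_video_uv(
    world_point: Tuple[float, float, float],
    camera_matrix: Matrix4,
) -> Optional[Tuple[float, float]]:
    """Return (u, v) in [0, 1] for a world point, or None if the camera cannot see it."""
    x, y, z = world_point
    p = (x, y, z, 1.0)  # homogeneous coordinates

    # Matrix-vector product: world space to clip space.
    clip = [sum(row[i] * p[i] for i in range(4)) for row in camera_matrix]
    w = clip[3]
    if w <= 0.0:
        return None  # the point lies behind the camera

    # Perspective divide gives normalized device coordinates in [-1, 1].
    ndc_x, ndc_y = clip[0] / w, clip[1] / w
    if abs(ndc_x) > 1.0 or abs(ndc_y) > 1.0:
        return None  # the point falls outside the video frame

    # Remap [-1, 1] to [0, 1] texture coordinates.
    return (0.5 * ndc_x + 0.5, 0.5 * ndc_y + 0.5)


# Illustrative camera at the origin looking down -z, with w = -z (assumption).
DEMO_CAMERA: Matrix4 = (
    (1.0, 0.0, 0.0, 0.0),
    (0.0, 1.0, 0.0, 0.0),
    (0.0, 0.0, 1.0, 0.0),
    (0.0, 0.0, -1.0, 0.0),
)

if __name__ == "__main__":
    print(project_to_video_uv((0.0, 0.0, -2.0), DEMO_CAMERA))
    print(project_to_video_uv((0.0, 0.0, 2.0), DEMO_CAMERA))
```

In a WebGL renderer the same arithmetic would typically run per fragment in a shader, with the returned coordinates used to sample the video texture.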

Publications: Proceedings of the 21st International Conference on Web3D Technology (Web3D), 2016.

Keywords: virtual reality; mixed-reality; video-based rendering; projection mapping; surveillance video; WebGL; WebVR; interactive graphics

Cited By:

- A Review of Video Surveillance Systems. Journal of Visual Communication and Image Representation. Omar Elharrouss, Noor Almaadeed, and Somaya Al-Maadeed.
- An Inexpensive Upgradation of Legacy Cameras Using Software and Hardware Architecture for Monitoring and Tracking of Live Threats. IEEE Access. Ume Habiba, Muhammad Awais, Milhan Khan, and Abdul Jaleel.
- Spatiotemporal Retrieval of Dynamic Video Object Trajectories in Geographical Scenes. Transactions in GIS. Yujia Xie, Meizhen Wang, Xuejun Liu, Ziran Wang, Bo Mao, Feiyue Wang, and Xiaozhi Wang.
- Detection of Multicamera Pedestrian Trajectory Outliers in Geographic Scene. Wireless Communications and Mobile Computing. Wei Wang, Yujia Xie, and Xiaozhi Wang.
- Multi-camera Video Synopsis of a Geographic Scene Based on Optimal Virtual Viewpoint. Transactions in GIS. Yujia Xie, Meizhen Wang, Xuejun Liu, Xing Wang, Yiguang Wu, Feiyue Wang, and Xiaozhi Wang.
- Multi-Level Clustering Algorithm for Pedestrian Trajectory Flow Considering Multi-Camera Information. 2022 2nd International Conference on Computer Science, Electronic Information Engineering and Intelligent Control Technology (CEI). Wei Wang and Yujia Xie.
- Video-Geographic Scene Fusion Expression Based on Eye Movement Data. 2021 IEEE 7th International Conference on Virtual Reality (ICVR). Xiaozhi Wang, Yujia Xie, and Xing Wang.
- Multi-Camera Light Field Capture: Synchronization, Calibration, Depth Uncertainty, and System Design. Elijs Dima.
- MonoMR: Synthesizing Pseudo-2.5D Mixed Reality Content From Monocular Videos. Applied Sciences. Dong-Hyun Hwang and Hideki Koike.
- Feature Based Object Tracking: A Probabilistic Approach. Florida Institute of Technology. Kaleb Smith.
- SIGNET: Efficient Neural Representation for Light Fields. 2021 IEEE/CVF International Conference on Computer Vision (ICCV). Brandon Feng and Amitabh Varshney.
- Enabling Artificial Intelligence Analytics on the Edge. London South Bank University. Vasilis Tsakanikas.