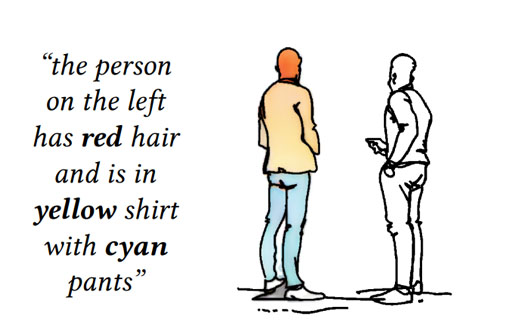

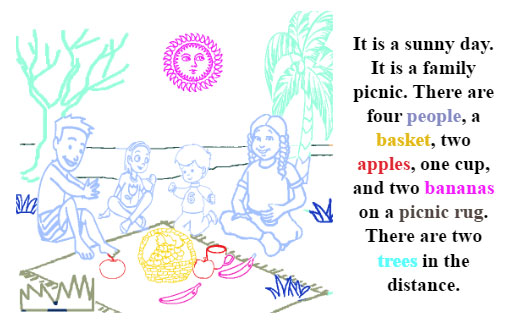

We introduce LUCSS, a language-based system for interactive colorization of scene sketches that relies on a semantic understanding of the sketch. LUCSS is built upon deep neural networks trained on a large-scale repository of scene sketches and cartoon-style color images with text descriptions. It consists of three sequential modules. First, the segmentation module automatically partitions an input scene sketch into individual object instances. Next, the captioning module generates a text description, including spatial relationships, from the instance-level segmentation results. Finally, the interactive colorization module lets users edit the caption and produces colored images based on the altered caption. Our experiments show the effectiveness of our approach and the advantage of its components over alternative choices.
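The three-module flow described above can be illustrated with a toy sketch. This is not the LUCSS implementation: every function here (`segment`, `caption`, `colorize`, `run_pipeline`), the `Instance` type, and the `PALETTE` set are hypothetical stand-ins that return hard-coded or rule-based results in place of the trained networks. It only shows how the output of each stage feeds the next and where the user's caption edit enters.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# Hypothetical color vocabulary; the real system learns colors from data.
PALETTE = {"yellow", "green", "blue", "red"}


@dataclass
class Instance:
    """One segmented object: a class label and a box (x0, y0, x1, y1)."""
    label: str
    box: Tuple[int, int, int, int]


def segment(sketch: List[List[int]]) -> List[Instance]:
    """Stand-in for the segmentation module: returns fixed, made-up instances."""
    return [Instance("sun", (0, 0, 2, 2)), Instance("tree", (4, 3, 6, 8))]


def caption(instances: List[Instance]) -> str:
    """Stand-in for the captioning module: a rule-based left/right description."""
    width = max(inst.box[2] for inst in instances)
    parts = []
    for inst in instances:
        center_x = (inst.box[0] + inst.box[2]) / 2
        side = "left" if center_x < width / 2 else "right"
        parts.append(f"a {inst.label} on the {side}")
    return " and ".join(parts)


def colorize(instances: List[Instance], text: str) -> Dict[str, str]:
    """Stand-in for colorization: reads the color word placed before each label."""
    words = text.split()
    colors = {}
    for inst in instances:
        color = "default"
        if inst.label in words:
            idx = words.index(inst.label)
            if idx > 0 and words[idx - 1] in PALETTE:
                color = words[idx - 1]
        colors[inst.label] = color
    return colors


def run_pipeline(sketch: List[List[int]],
                 edit_caption: Callable[[str], str]) -> Tuple[str, Dict[str, str]]:
    """Segment, caption, let the user edit the caption, then colorize."""
    instances = segment(sketch)
    generated = caption(instances)
    edited = edit_caption(generated)
    return generated, colorize(instances, edited)


if __name__ == "__main__":
    # The lambda plays the role of the user editing the generated caption.
    user_edit = lambda t: t.replace("a sun", "a yellow sun").replace("a tree", "a green tree")
    print(run_pipeline([[0]], user_edit))
```

The user edit is passed in as a callable so that the interactive step stays separate from the three automatic stages, mirroring the sequential design in the abstract.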

Publications: ACM Transactions on Graphics (SIGGRAPH Asia), 2019.

Keywords: deep neural networks, image segmentation, language-based editing, scene sketch, sketch colorization, interactive graphics, interactive perception, augmented communication

European Conference on Computer Vision (ECCV), 2018.

Keywords: sketch dataset, scene sketch, sketch segmentation, interactive graphics
