HumanGPS: Geodesic PreServing Feature for Dense Human Correspondence

Digital Human

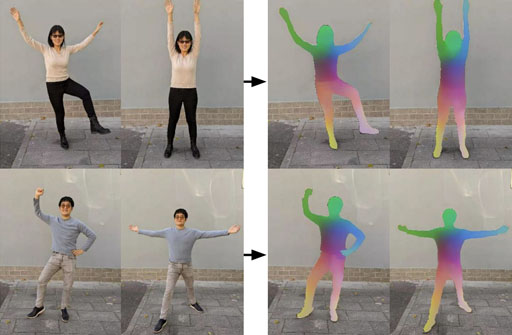

In this paper, we address the problem of building dense correspondences between human images under arbitrary camera viewpoints and body poses. Prior art either assumes small motion between frames or relies on local descriptors, which cannot handle large motion or visually ambiguous body parts, e.g., left vs. right hand. In contrast, we propose a deep learning framework that maps each pixel to a feature space, where the feature distances reflect the geodesic distances among pixels as if they were projected onto the surface of a 3D human scan. To this end, we introduce novel loss functions to push features apart according to their geodesic distances on the surface. Without any semantic annotation, the proposed embeddings automatically learn to differentiate visually similar parts and align different subjects into an unified feature space. Extensive experiments show that the learned embeddings can produce accurate correspondences between images with remarkable generalization capabilities on both intra and inter subjects.

Publications

Montage4D: Real-Time Seamless Fusion and Stylization of Multiview Video Textures

HumanGPS: Geodesic PreServing Feature for Dense Human Correspondence

Videos

Talks

Cited By

- Instant Panoramic Texture Mapping With Semantic Object Matching for Large-Scale Urban Scene Reproduction. IEEE Transactions on Visualization and Computer Graphics. Jinwoo Park, Ik-Beom Jeon, Sung-Eui Yoon, and Woontack Woo.

- Video Content Representation to Support the Hyper-Reality Experience in Virtual Reality. 2021 IEEE Virtual Reality and 3D User Interfaces (VR). Hyerim Park and Woontack Woo.

- Multi-View Neural Human Rendering. 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). Minye Wu, Yuehao Wang, Qiang Hu, and Jingyi Yu.

- LookinGood. ACM Transactions on Graphics. Ricardo Martin-Brualla, Rohit Pandey, Shuoran Yang, Pavel Pidlypenskyi, Jonathan Taylor, Julien Valentin, Sameh Khamis, Philip Davidson, Anastasia Tkach, Peter Lincoln, Adarsh Kowdle, Christoph Rhemann, Dan B Goldman, Cem Keskin, Steve Seitz, Shahram Izadi, and Sean Fanello.

- Spatiotemporal Texture Reconstruction for Dynamic Objects Using a Single RGB-D Camera. Computer Graphics Forum. Hyomin Kim, Jungeon Kim, Hyeonseo Nam, Jaesik Park, and Seungyong Lee.

- RealityCheck. Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems. Jeremy Hartmann, Christian Holz, Eyal Ofek, and Andrew Wilson.

- The Relightables. ACM Transactions on Graphics.Kaiwen Guo, Peter Lincoln, Philip Davidson, Jay Busch, Xueming Yu, Matt Whalen, Geoff Harvey, Sergio Orts-Escolano, Rohit Pandey, Jason Dourgarian, Danhang Tang, Anastasia Tkach, Adarsh Kowdle, Emily Cooper, Mingsong Dou, Sean Fanello, Graham Fyffe, Christoph Rhemann, Jonathan Taylor, Paul Debevec, and Shahram Izadi.

- Image-Guided Neural Object Rendering. 8th International Conference on Learning Representations. Justus Thies, Michael Zollh{\"o}fer, Christian Theobalt, Marc Stamminger, and Matthias Nie{\ss}ner.

- High-Precision 5DoF Tracking and Visualization of Catheter Placement in EVD of the Brain Using AR. ACM Transactions on Computing for Healthcare.Xuetong Sun, Sarah B. Murthi, Gary Schwartzbauer, and Amitabh Varshney.

- Volumetric Capture of Humans With a Single RGBD Camera Via Semi-Parametric Learning. 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR).Rohit Pandey, Cem Keskin, Shahram Izadi, Sean Fanello, Anastasia Tkach, Shuoran Yang, Pavel Pidlypenskyi, Jonathan Taylor, Ricardo Martin-Brualla, Andrea Tagliasacchi, George Papandreou, and Philip Davidson.

- Pri3D: Can 3D Priors Help 2D Representation Learning?. https://arxiv.org/abs/2104.11225.pdf. Ji Hou, Saining Xie, Benjamin Graham, Angela Dai, and M. Nießner.